You’ve probably read the following assertion in op-eds or heard it as a refrain at industry conferences, but we think it’s one that bears repeating: misinformation and disinformation will be among the greatest challenges societies face in the 21st century, and likely beyond.

But like climate change, another existential threat, misinformation isn’t some distant science-fiction issue our children will face. It’s here. Now. Challenging organizations as they work to deliver truth to the communities they serve. Driving trust in public institutions to record lows. Creating polarization not seen in our lifetimes and endangering basic tenets of democracy. Today it’s rogue Facebook groups; in a few years it will be deepfakes that are increasingly difficult to detect.

How did things get this way? There’s plenty of quality journalism exploring that question. For now, we’re more interested in unpacking what we can do about misinformation as professional communicators. Because that’s the good news—we are not powerless in the face of this issue. It’s not something we can fix overnight, but there are things every organization can do to help address and mitigate the flow of mis- and disinformation. Here are some of the basics.

1. Structure your message—especially the headline.

The onus is on content producers to ensure that readers, who often only have time to skim headlines, are getting factually accurate information rather than potentially misleading clickbait. Here’s a great breakdown from Hootsuite on writing effective and compelling headlines that avoid our worst clickbait instincts.

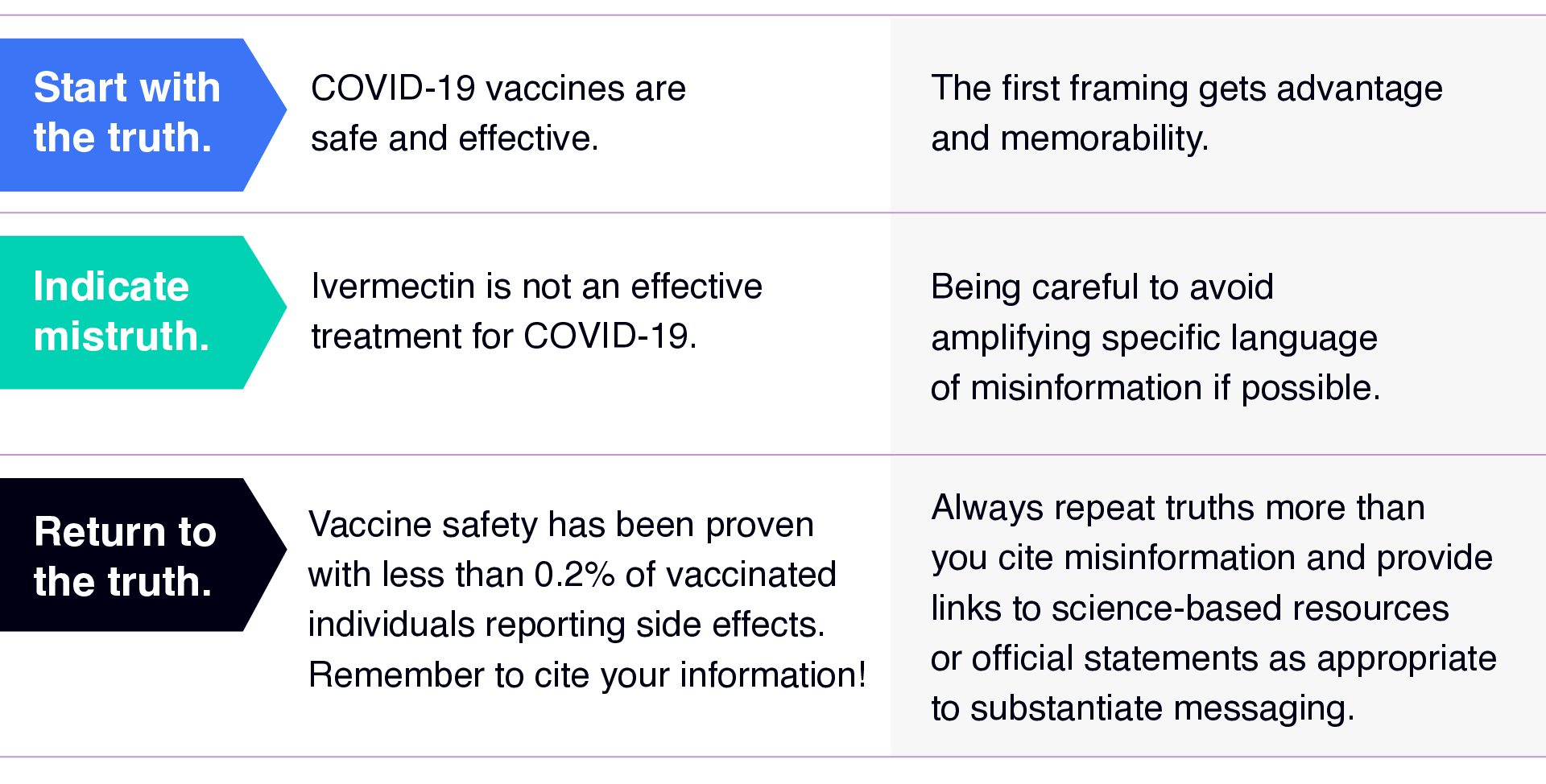

One go-to is the old “truth sandwich.” Let’s take a look at an example:

Nearly every piece of content, no matter how long or complex, can fit this model. It’s a proven structure to reinforce facts in any form of content.

It’s also an idea that appears in journalistic standards, including the PBS Editorial Standards. “Accuracy includes more than simply verifying whether information is correct; facts must be placed in sufficient context based on the nature of the piece to ensure that the public is not misled,” the standards state. “Producers must also be mindful of the language used to frame the facts to avoid deceiving or misleading the audience or encouraging false inferences.”

2. Identify the appropriate role for emotion and storytelling within fact-based content.

Many publishers erroneously believe emotion has no place in fact-based messaging. The truth is, no matter how streamlined facts are, without some level of emotion or human connection built in, a message becomes dry and forgettable. Misinformation and disinformation are often built on emotion (particularly anger and fear), which makes them memorable even if the underlying facts are nonexistent.

That doesn’t mean we need to produce a testimonial for every message, but messaging should always have a human-centered backdrop, tying it to the real, lived experience of someone relatable to your audience. Real people are allowed to express emotions. We all have them, and they are often more compelling than facts alone. Embracing facts as part of stories, and finding ways to recognize and empathize with the struggles felt by those we’re seeking to reach, is critical to conveying information in blogs, videos, social posts and more.

This video, in partnership with Better Health Together and Spokane Pride, features Spokane Pride President Esteban Herevia sharing the emotional story of his own lived experience and how it shapes his advocacy for others to get vaccinated against COVID-19. It reinforces key facts in an accessible manner for others in his community, while highlighting the personal impacts and taking the viewer on an emotional journey.

3. Respond to misinformation.

First, consider what misinformation needs to be addressed and in what capacity. The last thing we want to do as communicators and organizations working to educate is to give any more oxygen than necessary to nonsense. It distracts from truth and creates uncertainty with “both-sides” bias.

In our post-social media landscape, there will also be inaccurate information posted, oftentimes with good intentions, by everyday individuals. The size of their platform and reach should be considered, along with the severity of the misinformation. A post from Jane Doe to her 30 Twitter followers that states, “masks are not worth wearing to protect against COVID-19 because they are uncomfortable,” does not need a response. A retweet of your original post by a celebrity or a candidate running for office stating, “masks are ineffective,” likely merits a straightforward, informative correction that links to resources with accessible breakdowns of the science behind masking.

4. Recognize you aren’t in this alone.

It would be easy for any one local health jurisdiction to feel overwhelmed by the crush of misinformation during the COVID-19 pandemic if they were the only organization working to help make communities safer and healthier. But they aren’t alone. There have been countless community-based organizations, private businesses, non-profits and more sharing positive public health messaging during this time. Identifying and activating those partners into messaging coalitions is a critical part of reaching audiences in an era of low trust. We must add voices that audiences trust into the mix, be they faith leaders, cultural cornerstones, Instagram influencers, personal physicians, you name it. Our job is to put in the research to learn who skeptical audiences trust, and to build partnerships with those organizations and individuals so that they can carry the message.

Misinformation is here to stay, but so are we.

Once misinformation is published, it’s typically difficult to remove. Turns out information is a lot like personal health: the best way to maintain both is prevention. Fighting misinformation is a battle fought one skirmish at a time, not as a whole war. All we can control is the way in which we publish factual content through our owned media channels, and how we respond to inaccurate comments from individuals or organizations.

This problem is going to get tougher as technology like deepfakes becomes easier to produce, and as social media algorithms further ensconce audiences in echo chambers fed by potentially nefarious sources. Communicators can’t solve this problem on our own, since technology providers must also reform their platforms, but we can help shift the culture toward fact-based representations of information as we publish it. We can help inoculate against misinformation with a steady flow of fact-based content that is easy to read, connects to relatable stories and is reinforced by people audiences trust.

We're here to help

If you need help working through communications strategy, media engagement or audience engagement during this time, give us a call. We’re happy to talk strategy and help.